Can Qualtrics Power an Experimental Chatbot? How We Would Build One for a Professor at a Singaporean University

When Professor Georgios Christopoulos from NTU asked whether Qualtrics could be used to build a modular experimental chatbot platform that can run a study, the short answer was:

Yes.

But not in the way most people think.

Qualtrics alone is not a chatbot engine.

What it is, however, is one of the strongest experimental orchestration platforms available today.

At WunderWaffen, this is exactly the kind of system we design: platforms where research control, AI behavior, and data integrity matter more than flashy demos.

Below is how the entire platform described in NTU’s brief would be built — step by step. All steps are simplified and tweaked as platform details cannot be publicly disclosed.

Short summary:

- The Qualtrics study is embedded in a web app via an iframe and receives ID information through embedded data fields.

- The participant interacts with the web app.

- Each participant is assigned an ID, and their actions are timestamped.

- The participant's messages are sent to an LLM API, which returns a response.

- The response is displayed, and the conversation history is kept in the backend.

- ID information is posted to Qualtrics.

- Data is sent to a Google Sheet.

Step 1: Use Qualtrics as the Experimental Backbone (Not the Chatbot)

The first design decision is philosophical:

Qualtrics controls the experiment.

The chatbot executes the experiment.

Qualtrics is responsible for:

- Participant recruitment and consent

- Random assignment to experimental conditions

- Pre- and post-interaction surveys

- Timing control and session IDs

- Final data aggregation

Each participant is assigned exactly one chatbot condition (one of Chatbots 101–107). The chatbots are labelled as follows:

- Chatbot 101 – Baseline (Neutral & Informative): Provides direct, no-frills answers. Objective tone, minimal personality cues. Balanced and serves as a control.

- Chatbot 102 – High Tangibility: Feels like a present, physical system. Uses structured, mechanical language (e.g., “Executing forecast module…”), avoids emotional or conversational tone.

- Chatbot 103 – High Transparency: Focuses on clearly explaining its internal reasoning. Frequently includes phrases like “Here's how I reached that conclusion…” and uses decision trees or logic summaries.

- Chatbot 104 – High Anthropomorphism: Friendly, casual, and human-like. Uses small talk, emojis (if appropriate), personal expressions, and emotional tone.

- Chatbot 105 – High Reliability: Shows consistent reasoning patterns and response styles. Echoes earlier statements, reinforces stable logic, and avoids contradictions.

- Chatbot 106 – High Immediacy: Fast, responsive, and adaptive. Uses short, reactive replies and adapts its tone and suggestions based on user input in real time.

- Chatbot 107 – Low Reliability: Occasionally displays signs of malfunction or inconsistency. May produce errors such as "An error occurred, please retype your question", or offer slightly contradictory advice across similar inputs. Designed to test user tolerance and trust under degraded AI performance.
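The Low Reliability condition only works experimentally if its failures are controlled. A minimal sketch of one way to do that, assuming a seeded random draw per turn: the function name `maybe_inject_fault`, the 20% error rate, and the seeding scheme are illustrative assumptions, not details of the NTU platform.

```python
import random

ERROR_MESSAGE = "An error occurred, please retype your question"

def maybe_inject_fault(reply: str, session_id: str, turn: int,
                       condition_id: int, error_rate: float = 0.2) -> tuple[str, bool]:
    """Return (text_to_show, fault_injected).

    Only condition 107 is ever degraded. The RNG is seeded from the session
    and turn, so a given session replays identically during analysis.
    """
    if condition_id != 107:
        return reply, False
    rng = random.Random(f"{session_id}:{turn}")  # assumed seeding scheme
    if rng.random() < error_rate:
        return ERROR_MESSAGE, True
    return reply, False
```

The returned flag can be written to the interaction log, so every injected error is distinguishable from a genuine one.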

From Qualtrics:

- The participant is redirected into the chatbot interface

- The assigned condition ID is passed securely

- When the task ends, the participant is returned to Qualtrics

This preserves clean between-subjects experimental design.
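One way to read "passed securely" is that the chatbot server should reject a condition ID that was altered in the URL. The sketch below signs the handoff parameters with an HMAC and verifies them on arrival. The base URL, the parameter names `pid`, `cond`, and `sig`, and the shared secret are all assumptions for illustration; where the signature is generated depends on the deployment.

```python
import hashlib
import hmac
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlparse

SECRET = b"replace-with-a-real-secret"  # assumption: shared secret kept server-side

def sign(pid: str, cond: str) -> str:
    """HMAC-SHA256 over the participant ID and condition ID."""
    msg = f"{pid}|{cond}".encode()
    return hmac.new(SECRET, msg, hashlib.sha256).hexdigest()

def build_redirect(base_url: str, pid: str, cond: str) -> str:
    """Build the URL that sends a participant to the chatbot interface."""
    query = urlencode({"pid": pid, "cond": cond, "sig": sign(pid, cond)})
    return f"{base_url}?{query}"

def verify(url: str) -> Optional[dict]:
    """Return the handoff parameters if the signature is valid, else None."""
    params = {k: v[0] for k, v in parse_qs(urlparse(url).query).items()}
    if "pid" not in params or "cond" not in params:
        return None
    expected = sign(params["pid"], params["cond"])
    if hmac.compare_digest(expected, params.get("sig", "")):
        return {"pid": params["pid"], "cond": params["cond"]}
    return None
```

A participant who edits `cond=104` to `cond=107` in the address bar fails verification, so the between-subjects assignment cannot be changed from the browser.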

Step 2: Build a Web-Based Chatbot Interface (Participant Mode)

The chatbot itself lives in a web app (or web interface), embedded in or launched from Qualtrics. The chatbot is controlled via a system prompt. Conversations are logged for analysis.

From the participant’s point of view, it feels simple:

- Read a scenario

- Chat with an AI

- Make decisions

- See outcomes

From a system perspective, everything is tightly controlled.

The interface supports:

- Text-based chat

- Buttons and sliders for decisions

- Display of images and charts

- Optional avatars or voice (if required)

No app downloads.

Desktop and mobile friendly by default.
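The system-prompt control described in this step can be sketched as a small session object that prepends the condition's prompt to the running conversation. The prompt wording and the `ChatSession` class are hypothetical. The role/content message shape is common to many chat-style LLM APIs, but the actual API call is deliberately left out.

```python
from dataclasses import dataclass, field

# Hypothetical prompts; the real wording would come from the researchers.
SYSTEM_PROMPTS = {
    101: "Answer directly and objectively. No personality cues.",
    104: "Be friendly, casual, and human-like. Small talk is welcome.",
}

@dataclass
class ChatSession:
    session_id: str
    condition_id: int
    history: list = field(default_factory=list)

    def build_messages(self, user_text: str) -> list:
        """Record the user turn and return the full payload for the LLM call."""
        self.history.append({"role": "user", "content": user_text})
        system = {"role": "system", "content": SYSTEM_PROMPTS[self.condition_id]}
        return [system, *self.history]

    def record_reply(self, reply: str) -> None:
        """Store the assistant turn so it is included in later payloads and logs."""
        self.history.append({"role": "assistant", "content": reply})
```

Because the system prompt is looked up from the condition ID on every turn, the participant cannot drift into another condition mid-session, and `history` doubles as the conversation log.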

Step 3: Implement Personality & Preference Identification

Early in the interaction, the chatbot asks lightweight, conversational questions to understand how the participant thinks.

Participants first see this on-screen message:

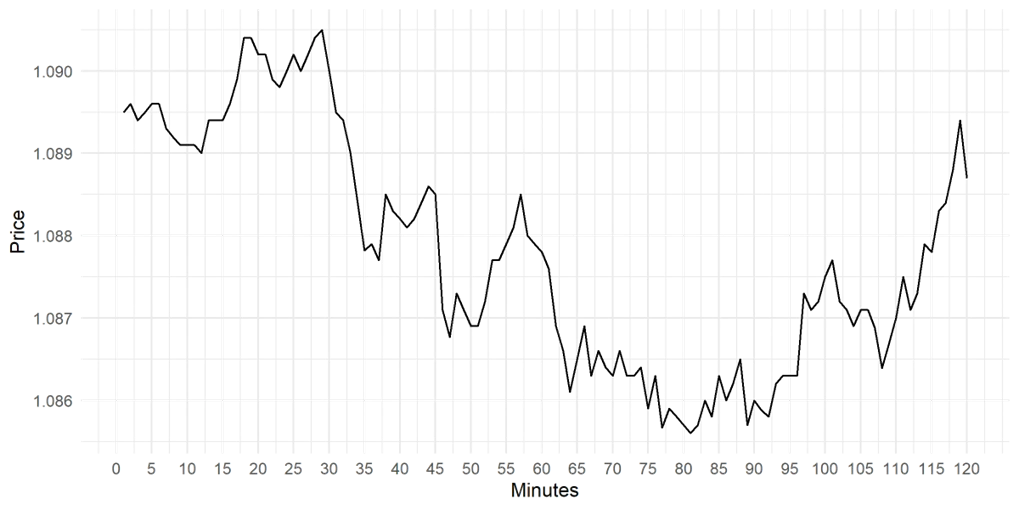

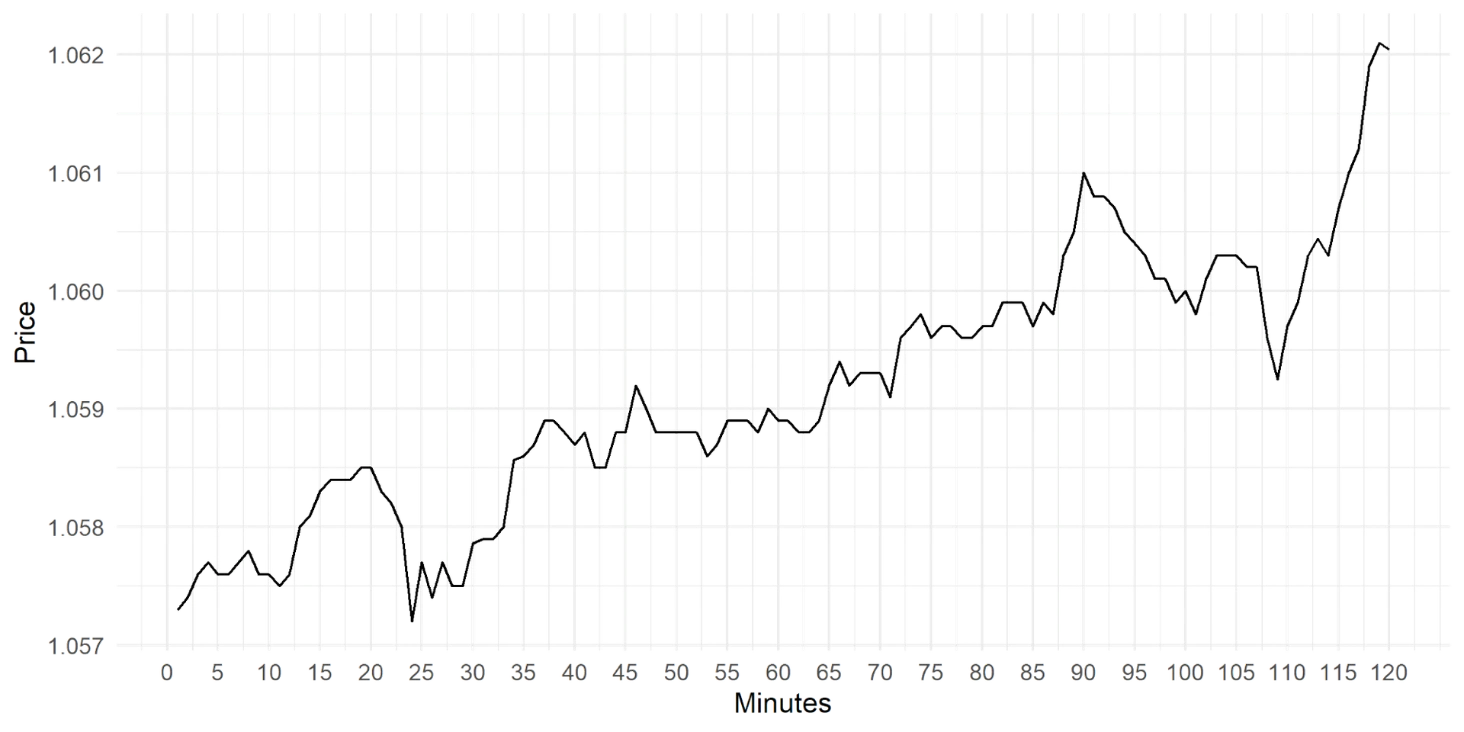

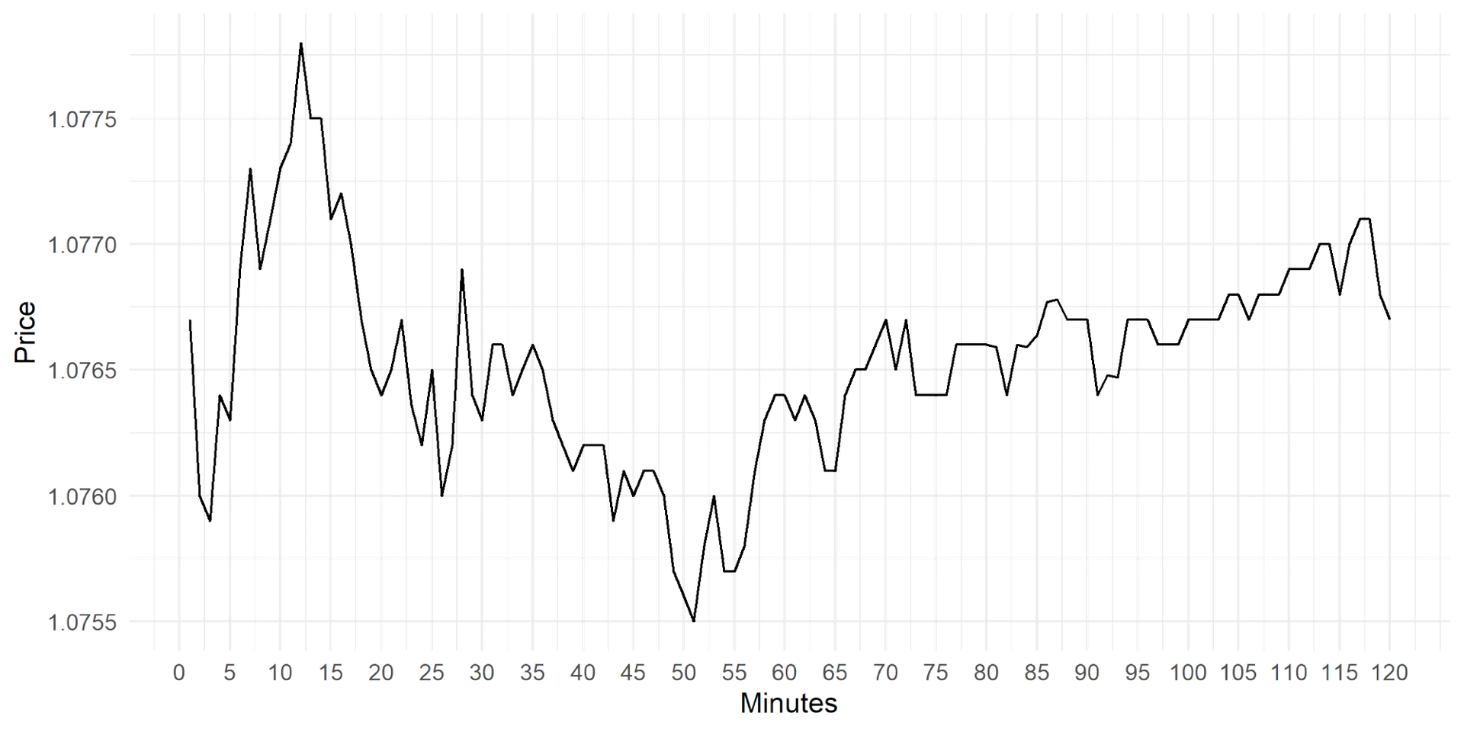

| “Welcome and thank you for participating in our experiment! In this phase, you’ll be asked to make a quick financial decision. Imagine you have $10,000 that you need to allocate across three different stocks. You're aiming for a short-term return — let’s say you need the money for an unexpected opportunity tomorrow (e.g., a last-minute payment, a flash investment opportunity, etc.). Below, you’ll see the performance graphs of the three available stocks. 👉 Your task: Talk to the ChatBot to help figure out how to allocate your funds across these stocks. The ChatBot may ask you a few questions to get a sense of your profile and preferences. You have five minutes to explore the options — but don’t rush! Take the time to discuss and ask the ChatBot questions. It may offer useful advice based on your goals and how you interact with it. 💡 Tip: The ChatBot you’re speaking with will also assist you in the next part of the study. This is a great chance to see how it works and decide how much you want to rely on its advice later on.” These are the three graphs: Graph 1

Graph 2:

Graph 3:

|

These questions assess:

- Risk tolerance

- Decision style

- Comfort with uncertainty

This is not a survey — it’s part of the conversation.

Behind the scenes:

- Responses are analyzed using NLP

- A temporary user profile is created for the session

- The chatbot adapts how it speaks, not which condition it is in

This keeps the experimental manipulation intact while improving realism.
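To illustrate the separation between profile and condition, here is a deliberately simple sketch. The keyword lists are a toy stand-in for whatever NLP the real platform uses, and `SessionProfile` and `compose_prompt` are hypothetical names. The point is structural: the profile only appends a context hint and never edits the condition prompt.

```python
from dataclasses import dataclass

# Toy keyword lists standing in for a real NLP model (assumption).
CAUTIOUS = {"safe", "careful", "worried", "stable", "secure"}
BOLD = {"risk", "aggressive", "gamble", "bold", "fast"}

@dataclass
class SessionProfile:
    risk_score: int = 0  # negative = cautious, positive = risk-seeking

    def update(self, text: str) -> None:
        """Adjust the score from keywords found in one participant message."""
        words = {w.strip(".,!?").lower() for w in text.split()}
        self.risk_score += len(words & BOLD) - len(words & CAUTIOUS)

    def style_hint(self) -> str:
        if self.risk_score > 0:
            return "The participant seems comfortable with risk."
        if self.risk_score < 0:
            return "The participant seems cautious."
        return "No clear risk preference yet."

def compose_prompt(condition_prompt: str, profile: SessionProfile) -> str:
    # The condition prompt is never modified; the hint is only appended.
    return f"{condition_prompt}\n\nContext: {profile.style_hint()}"
```

The profile lives only in memory for the session, matching the "temporary user profile" described above.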

Step 4: Deliver the Financial Interaction Task

Next, the participant enters the Interaction Task defined by the researcher.

For example:

- A stock price chart is displayed

- The participant predicts Up (⬆️) or Down (⬇️)

- They enter an investment amount

The UI is intentionally simple:

- Clear buttons

- Minimal cognitive load

- No financial expertise required

Every input is timestamped and logged.
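A minimal sketch of what "timestamped and logged" could look like in practice. The `Event` fields and the JSON Lines output are assumptions; the real schema is not public.

```python
import json
import time
from dataclasses import asdict, dataclass, field

@dataclass
class Event:
    session_id: str
    round_no: int
    kind: str   # e.g. "prediction", "amount", "chat_message"
    value: str
    # Milliseconds since the Unix epoch, captured when the event is created.
    ts_ms: int = field(default_factory=lambda: int(time.time() * 1000))

class EventLog:
    def __init__(self) -> None:
        self.events: list[Event] = []

    def record(self, session_id: str, round_no: int, kind: str, value) -> Event:
        """Append one timestamped event; values are stored as strings."""
        event = Event(session_id, round_no, kind, str(value))
        self.events.append(event)
        return event

    def to_jsonl(self) -> str:
        """Serialize the log as one JSON object per line."""
        return "\n".join(json.dumps(asdict(e)) for e in self.events)
```

Usage is one call per input, for example `log.record("s1", 1, "prediction", "up")` followed by `log.record("s1", 1, "amount", 2500)`.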

Step 5: Activate the Chatbot Behavior Engine (The Core of the Experiment)

This is where the platform becomes truly experimental.

The Chatbot Behavior Engine determines:

- How the AI explains itself

- How confident it sounds

- Whether it feels human, mechanical, or unreliable

- How it responds to “why?” or follow-up questions

Each participant experiences one predefined chatbot variant, such as:

- Neutral & Informative

- High Transparency

- High Anthropomorphism

- High Reliability

- Low Reliability (controlled inconsistency)

These behaviors are not hard-coded.

They are defined by researchers using:

- Structured prompts

- Rule sets

- CSV-based instructions

- Or a simple authoring interface
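As an illustration of CSV-based instructions, the sketch below turns a researcher-edited CSV into system prompts. The column names (`condition_id`, `label`, `tone`, `instruction`) and the sample rows are hypothetical, not the platform's actual file format.

```python
import csv
import io

# Hypothetical CSV layout; column names and wording are assumptions.
SAMPLE_CSV = """condition_id,label,tone,instruction
101,Baseline,neutral,"Give direct, objective answers."
103,High Transparency,neutral,"Explain your reasoning step by step."
104,High Anthropomorphism,warm,"Use a friendly, casual voice."
"""

def load_conditions(csv_text: str) -> dict[int, dict[str, str]]:
    """Parse the CSV into a mapping keyed by numeric condition ID."""
    conditions = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        conditions[int(row["condition_id"])] = {
            "label": row["label"],
            "tone": row["tone"],
            "instruction": row["instruction"],
        }
    return conditions

def system_prompt(conditions: dict, condition_id: int) -> str:
    """Assemble the system prompt for one condition."""
    c = conditions[condition_id]
    return f"Tone: {c['tone']}. {c['instruction']}"
```

With this shape, changing a chatbot's behavior means editing a spreadsheet row rather than touching code.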

Step 6: Generate AI Recommendations (Without Breaking the Experiment)

The chatbot provides a recommendation:

- Up or Down

- With an explanation aligned to its assigned condition

Explanations may reference:

- Simplified market logic

- Predefined reasoning templates

- Or controlled randomization

Crucially:

- Participants can accept or ignore the advice

- The AI never forces an outcome

This preserves the study’s focus on trust, reliance, and understanding.
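"Controlled randomization" can be made reproducible by seeding on the session and round. In this sketch the advice matches the true direction with a fixed probability. The 70% accuracy, the template wording, and the `recommend` function are assumptions chosen for illustration.

```python
import random

# Hypothetical explanation templates per condition.
TEMPLATES = {
    101: "Recommendation: {direction}.",
    103: ("Recommendation: {direction}. Here's how I reached that conclusion: "
          "the recent trend in the chart points {direction}."),
}

def recommend(session_id: str, round_no: int, condition_id: int,
              true_direction: str, accuracy: float = 0.7) -> dict:
    """Advice matches the true direction with probability `accuracy`.

    Seeding on (session, round) makes each recommendation reproducible.
    """
    rng = random.Random(f"{session_id}:{round_no}")
    correct = rng.random() < accuracy
    opposite = "Down" if true_direction == "Up" else "Up"
    direction = true_direction if correct else opposite
    text = TEMPLATES[condition_id].format(direction=direction)
    return {"direction": direction, "text": text, "advice_correct": correct}
```

The function only returns advice. Whether the participant follows it is recorded separately, which keeps the recommendation from forcing an outcome.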

Step 7: Capture Final Decisions and Compute Outcomes

Participants confirm:

- Their final choice

- Their investment amount

The system then:

- Determines correctness

- Calculates profit or loss using predefined rules

- Displays feedback clearly and neutrally

This feedback is informational, not evaluative.
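The predefined rules are not public, so the following is a sketch under an assumed rule: a correct call earns a fixed percentage of the invested amount, and an incorrect call loses the same percentage. The 10% `return_rate` and the function name are hypothetical.

```python
def score_round(choice: str, amount: float, true_direction: str,
                return_rate: float = 0.10) -> dict:
    """Hypothetical rule: a correct call gains amount * return_rate,
    an incorrect call loses the same amount."""
    if choice not in {"Up", "Down"}:
        raise ValueError(f"unknown choice: {choice!r}")
    if amount < 0:
        raise ValueError("amount must be non-negative")
    correct = choice == true_direction
    profit = round(amount * return_rate * (1 if correct else -1), 2)
    return {"correct": correct, "profit": profit}
```

Under this assumed rule, investing 1,000 on a correct "Up" call yields a profit of 100, while investing 500 on an incorrect call yields a loss of 50.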

Step 8: Repeat Across Multiple Rounds (If Required)

The same task can be repeated across multiple rounds (e.g. 35).

This enables round-by-round analysis of:

- Learning effects

- Trust calibration

- Changes in reliance on AI advice

All rounds are logged separately but linked to the same session ID.
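Once rounds are logged per session, a change in reliance can be estimated by comparing blocks of rounds. The sketch below uses agreement between the final choice and the advice as a simple proxy for reliance. The field names (`round_no`, `advice`, `final_choice`) and the block size of 5 are assumptions.

```python
from collections import defaultdict

def reliance_by_block(rounds: list[dict], block_size: int = 5) -> dict[int, float]:
    """Share of rounds in each block where the final choice matched the advice.

    Block 0 covers rounds 1..block_size, block 1 the next block_size, and so on.
    """
    followed = defaultdict(list)
    for r in rounds:
        block = (r["round_no"] - 1) // block_size
        followed[block].append(r["final_choice"] == r["advice"])
    return {b: sum(v) / len(v) for b, v in sorted(followed.items())}
```

A rising or falling share across blocks is a first indication of how trust calibrates over the session; it does not replace a proper statistical model.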

Step 9: Researcher Backend (Authoring & Control)

Researchers access a Researcher Mode dashboard where they can:

Configure Chatbots

- Define tone, confidence, formality

- Select or modify chatbot variants (101–107)

- Upload response templates via CSV

Build Tasks

- Upload financial scenarios

- Attach images or charts

- Define interaction constraints

Monitor Data

- View session progress

- Export clean datasets (CSV / JSON)

- Track interaction metadata and timing

No coding required.

Step 10: Full Data Logging & Export

Every action is recorded:

- Participant inputs

- Chatbot outputs

- Timing, order, and duration

- Special events (e.g. errors or apologies)

Qualtrics stores survey data.

The chatbot platform stores interaction data.

Both datasets can be merged cleanly using shared IDs.
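The merge itself is a join on the shared ID. A minimal standard-library sketch, assuming both exports have been loaded as lists of dictionaries and that the shared key is called `session_id` (a hypothetical column name):

```python
def merge_on_id(survey_rows: list[dict], chat_rows: list[dict],
                key: str = "session_id") -> list[dict]:
    """Attach each participant's survey fields to every matching interaction row.

    Interaction rows without a matching survey row are skipped.
    """
    survey_by_id = {row[key]: row for row in survey_rows}
    merged = []
    for chat in chat_rows:
        survey = survey_by_id.get(chat[key])
        if survey is None:
            continue  # orphan row; a real pipeline should report these
        merged.append({**survey, **chat})
    return merged
```

The result has one row per interaction record with the survey answers repeated alongside it, which is the long format most statistical packages expect for repeated-measures data.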

Why This Works (And Why Most Setups Fail)

Most attempts to “build chatbots in Qualtrics” fail because they:

- Treat Qualtrics as a chatbot engine

- Hard-code AI behavior

- Lose experimental control

This architecture works because:

- Qualtrics handles experimental rigor

- The chatbot handles human-like interaction

- Researchers retain full control

- Developers retain modularity

Final Answer to NTU

Yes — Qualtrics can absolutely be used to build this platform.

But only if it is used as:

- The experimental spine

- Not the conversational brain

At WunderWaffen, this is exactly the kind of system we specialize in:

AI platforms built for research, not demos.